There are plenty of articles out there about autonomous vehicles and how their technology makes them to cars what the iPhone has been to the telephone. That’s all well and good, but how are these vehicles going to survive in a world where everything from people putting on mascara while driving to roads that change from one day to the next is a fact of life? Well, let’s call it “car vision.” While I recognize that this is a fairly advanced connected car concept, I wanted to engage you with something new and illustrate how much the technology has evolved in less than a year. Let’s get our ADAS in gear. ADAS is the acronym for Advanced Driver Assistance System. What were you thinking?

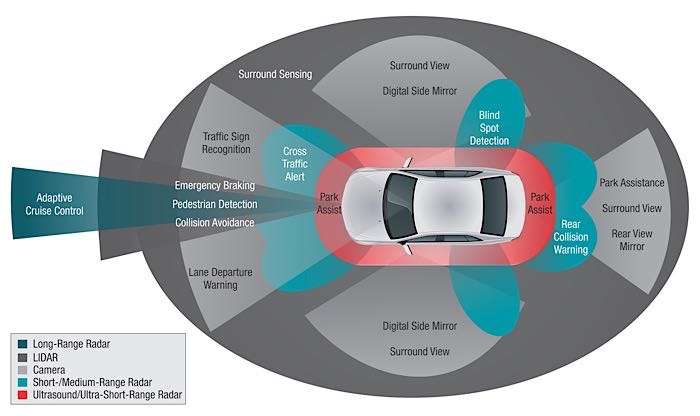

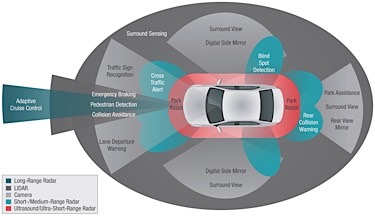

In June of last year, I listened to a presentation on sensor fusion as a means to provide “vision” for autonomous vehicles. This concept blends a number of different sensors to create a 360-degree view of the world around a car. The presenter made a very logical argument: Several different types of sensors would be required to handle different circumstances and distances. The presenter also pointed out that the human eye is not designed to work when the body is moving faster than it can move on its own.

So, even with a human behind the wheel of a car, there are compromises. For short-range parking maneuvers and low-speed navigation, ultrasound would be used. Cameras can be used to see things like objects on the road and where the lines are painted, but cameras need a lot of software to judge distance. If it snows, judging the edge of the road becomes pretty hard, and a camera is also less effective when it’s dark. No problem: We have the long- and medium-range radar sensors currently used in adaptive cruise control to judge distance. They are effective day or night.
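
To make that division of labor concrete, here is a minimal Python sketch of how software might decide which sensors to lean on in a given situation. The `Sensor` class, the `usable_sensors` function and every range figure below are illustrative assumptions of mine, not numbers from the presentation or from any supplier, and treating a camera as simply unusable in the dark is a deliberate oversimplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sensor:
    name: str
    max_range_m: float   # assumed, illustrative figure (not a real spec)
    works_in_dark: bool  # simplified yes/no; real sensors degrade gradually


# Hypothetical sensor suite based on the roles described in the article.
SENSORS = [
    Sensor("ultrasound", 5.0, True),   # parking and low-speed maneuvers
    Sensor("camera", 100.0, False),    # lane lines and objects, weak at night
    Sensor("radar", 200.0, True),      # distance judging, day or night
]


def usable_sensors(target_range_m: float, dark: bool) -> list[str]:
    """Return the names of sensors that can cover the target range
    under the given lighting condition."""
    return [
        s.name
        for s in SENSORS
        if s.max_range_m >= target_range_m and (s.works_in_dark or not dark)
    ]


print(usable_sensors(3.0, dark=False))   # close-in, daylight
print(usable_sensors(50.0, dark=True))   # mid-range, at night
```

A real fusion system would weigh overlapping readings rather than switch sensors on and off, but the sketch shows why no single sensor type covers every case.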

The last of the sensors mentioned was LiDAR. In the graphic above, you will see the scope of what LiDAR might be used for in this sensor fusion scheme. The Google self-driving car makes use of a LiDAR sensor array on its roof that, I am told, costs about $75,000. It’s not very likely to appear on the next-generation Toyota Prius anytime soon.

So, what else does the car do besides driving me to work while I mess with the connection between my really-smartphone and the audio of my really-smart car? Well, it will not have the benefit of someone yelling at it to turn, so it will need a way to navigate. That navigation comes from GPS and high-definition mapping. TomTom and others have spent a considerable amount of money turning the entire surface of the drivable Earth into pixels with coordinates.

Unlike the navigation you are currently using, this system will pinpoint where you are to within millimeters by combining GPS location, cell tower location, LiDAR and vehicle velocity, presumably from the data bus. Remember, this was presented as state-of-the-art in June 2015. Sounds like a lot of fun to diagnose, repair and calibrate, doesn’t it?

Fast-forward to this January at the Consumer Electronics Show. After working my way through the human version of salmon swimming upstream to spawn to get into the exhibition hall, I met the evolution of car vision.

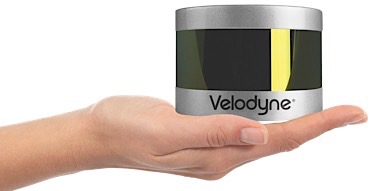

My first visit at the show was with Michael Jellen of Velodyne. Velodyne got its start in car vision back when DARPA started the robot wars to encourage advanced technology development. To say the company has come a long way is an understatement. Velodyne has a LiDAR sensor that brings the price much closer to an OE-acceptable range, but more importantly, it has evolved the software that goes with the sensor to the point that Jellen claims he can drive a car with LiDAR, GPS and HD maps. Let’s look at what LiDAR does.

The latest Velodyne LiDAR sensor is compact: It’s about the size of a hockey puck and roughly three times as tall. It spins and creates a 3D map of everything around it using 64 lasers with 10-millisecond return trip times.
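
For readers who like to see the geometry, here is a rough Python sketch of how a spinning sensor’s readings could be turned into 3D points. It assumes each laser return arrives as a distance plus two angles (where the sensor was pointing around the circle and how far up or down that laser is tilted). The function names and the axis convention are my own assumptions for illustration, not Velodyne’s software.

```python
import math


def lidar_return_to_xyz(range_m, azimuth_deg, elevation_deg):
    """Convert one laser return (distance and two angles) into x, y, z.

    Assumed axis convention: x forward, y left, z up, sensor at the origin.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # distance along the ground plane
    x = horizontal * math.cos(az)
    y = horizontal * math.sin(az)
    z = range_m * math.sin(el)
    return (x, y, z)


def sweep_to_point_cloud(returns):
    """Turn a list of (range_m, azimuth_deg, elevation_deg) tuples
    into a list of 3D points."""
    return [lidar_return_to_xyz(*r) for r in returns]


# Three made-up returns: straight ahead, directly left, and ahead but angled down.
cloud = sweep_to_point_cloud([(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (10.0, 0.0, -30.0)])
for point in cloud:
    print(tuple(round(v, 2) for v in point))
```

Repeat that conversion for every laser at every step of the rotation and you get the cloud of points that the software then has to interpret.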

Software translates the map the sensor creates into a picture of the world. Velodyne does it better because its map is more detailed. The software also builds a map of your neighborhood and remembers it.

Each time you drive, it looks for construction signs or other changes, and the system adapts. If the vehicle is being driven on a new road, it will depend more heavily on mapping and GPS. The most fascinating part of this is that the system will take the point-of-view map it is creating and flip it over 90 degrees so that it has a bird’s eye view of upcoming intersections. Working that out in your head will give you a headache. The demonstration was very impressive, and during CES, Ford was also showing a Fusion with four of these sensors mounted on the roof. In reality, the job could be done with one or two. Ford is experimenting with these sensors in its autonomous fleet. If you want to see more, visit velodynelidar.com.
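
The bird’s eye flip described above is less of a headache in code than in your head. One simple way to picture it, sketched below in Python, is to look straight down on the 3D points: ignore each point’s height and count how many points land in each square of a grid laid over the road. The `birds_eye_grid` function, the grid size and the axis convention (x forward, y left, z up) are my own assumptions for illustration. I don’t know how Velodyne’s software actually does it.

```python
def birds_eye_grid(points, cell_m=1.0, half_extent_m=5.0):
    """Project 3D points onto a top-down grid centered on the vehicle.

    Each cell holds the number of points that fall inside it. Height (z)
    is dropped, which is what looking straight down amounts to.
    """
    size = int(2 * half_extent_m / cell_m)
    grid = [[0] * size for _ in range(size)]
    for x, y, _z in points:
        row = int((x + half_extent_m) // cell_m)  # forward/backward position
        col = int((y + half_extent_m) // cell_m)  # left/right position
        if 0 <= row < size and 0 <= col < size:   # ignore points off the grid
            grid[row][col] += 1
    return grid


# Two points stacked at different heights in one spot, plus one far outside the grid.
grid = birds_eye_grid([(0.5, 0.5, 2.0), (0.5, 0.5, 0.1), (100.0, 0.0, 0.0)])
print(grid[5][5])  # both stacked points land in the same cell
```

Cells with many points are likely to be solid objects such as curbs, poles or other vehicles, which is what makes a top-down view handy for reading an intersection.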

Innovation like this is happening on multiple fronts in the ADAS vehicle development world. As a result, many who only last year were predicting mainstream use of this technology around 2025 have revised their predictions to “much sooner,” as one researcher I spoke to put it. With technology moving at this rate, figuring out what to learn to prepare for future cars seems like a daunting task. I suggest that you read everything with the understanding that, just like this article, it could be outdated by the time the ink dries.